Engineering Optimisation Tutorial 1

Lecture 2

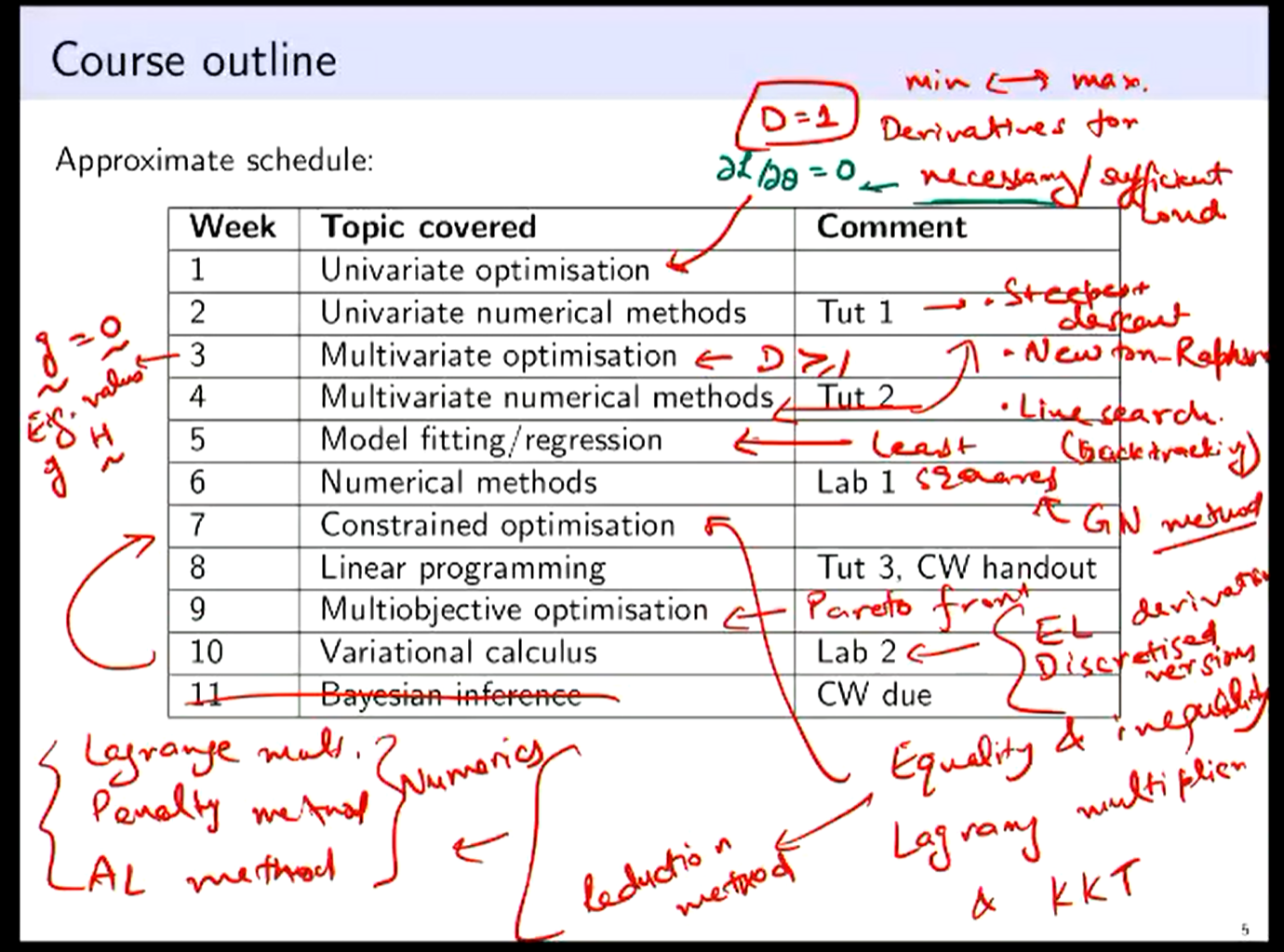

Notes from l1:

- We can approx Hessian with finite diff, such as using secant

For multivariate, where is bold, and we are now considering that and that

Lecture 3

Finding here is called Line Search

Chapter 3 SS Rao Book

Hessian:

4d1 + 2d2 = -1 2d1 + 2d2 = +1 2d1 = -2 ⇒ d1 = -1 ⇒ d2 = 3/2 or

Lecture 4

From the problem sheet

Hessian is symmetric so we need terms, or or

Lecture 5

For least squares we need N (num points) to be greater than or equal to D (the dimension) for there to be unique solutions, at N=D we have always a perfect solution (think a line between two points in 2D)

Gauss Newton always descends, even when Newton doesnt, which is interesting, given that it uses only approximation.

A combo of Gauss Newton and steepest descent is called Levenberg - Marquard method

We can define the “error” by least square as residuals and we try to optimise the objective function based on the residual

Called L2 Norm because we are taking square of the components then summing. Other options:

- L1 Norm:

- L norm:

Outlier can significantly distort the “best” result with the L2 norm. Because we’re squaring the residual, meaning that single outliers skew the data much more

l6

if is singular ⇒ Solution if not unique, therefore it depends on the initial guess.

Even without a perfect fit in N >> D, it will converge in a single step, its dependent on the gradient not the residual. Doesnt mean its a good fit though…

Rosenbrock function as an example for testing minimization algorithms

L_p norm is denoted as In terms of difficulty: Unconstrained Optimisation < Equality Constraint < Inequality Constraint

l7

Constrained Optimisation | \ __ By Reduction? (If Possible) \ __ Lagrange Multiplier (Take it to a higher dimension)

Active vs Inactive constraint, for inequalities, if our solution lies on the constraint (active, its basically an equality constraint, but if it doesnt, its an inactive constraint.

KKT condition is an extension of Lagrange, allowing for uncertainty on whether our constraints are active.

Augmented Lagrange uses both Lagrange and penalty methods

In unconstrained optimisation: we therefore have D “knobs” to turn. Gradient in any direction should be equal to 0 at minimum ⇒

Variational calculus Functional Optimisation

l8

Penalty method

As mu increases, we converge towards 1, but slowly

| Penalty Method | Langrange Method |

|---|---|

| Inexact Solution | Exact Solution |

| Choosing is non trivial | Saddle Problem |

Assume Solve for Update

(1)

Update (2)

For this:

\mathcal{L}_A(\theta, \lambda, \sigma, \mu) &= L(\theta) - \sum_{j=1}^{m} \lambda_j \epsilon_j(\theta) + \mu \sum_{j=1}^{m} \epsilon_j^2(\theta) \\&\quad- \sum_{k=1}^{n} \lambda_{m+k} I_k(\theta) + \mu \sum_{k=1}^{n} \max(0, -I_k(\theta) )^2 \end{align}where m is the amount of constrained conditions, and n is the inequality conditions

l9

Wednesday Mar 19th talks about exam

, used for shorthand for derivate:

Backtracking Question Example

Start at point , and calculate and steepest descent direction

(b) Implement one iteration of backtracking line search with parameters , and to find a suitable step size. Show all iterations of the backtracking procedure. [7 marks]

Alpha too large!

For :

Check Armijo: The condition is still not satisfied. Reduce again: For :

Check Armijo: Since , the Armijo condition is satisfied. We accept .

Question 2: BFGS Method [20 marks]

Consider the function and we are using the BFGS method to minimize it.

(a) Starting with the initial point and initial Hessian approximation (the identity matrix), calculate the first search direction and verify it is a descent direction. [4 marks]

(b) Assuming an exact line search gives a step size of , find the new point and calculate the gradient at this point. [4 marks]

(c) Using the BFGS update formula, compute the new Hessian approximation . Show all steps in your calculation. [8 marks]

(d) Calculate the second search direction using the updated Hessian approximation. [4 marks]

Solution 2:

(a) First search direction

For the function , the gradient is:

At :

With , the search direction is:

To verify this is a descent direction, we check that :

Therefore, is indeed a descent direction.

(b) New point and gradient

Using step size :

Calculate the gradient at :

(c) BFGS update

For the BFGS update, we need:

Now we can use the BFGS update formula:

Calculate each term:

Substituting:

(d) Second search direction

To find the second search direction, we need to solve the system :

We can find by computing :

Therefore:

This is the second search direction according to the BFGS method.